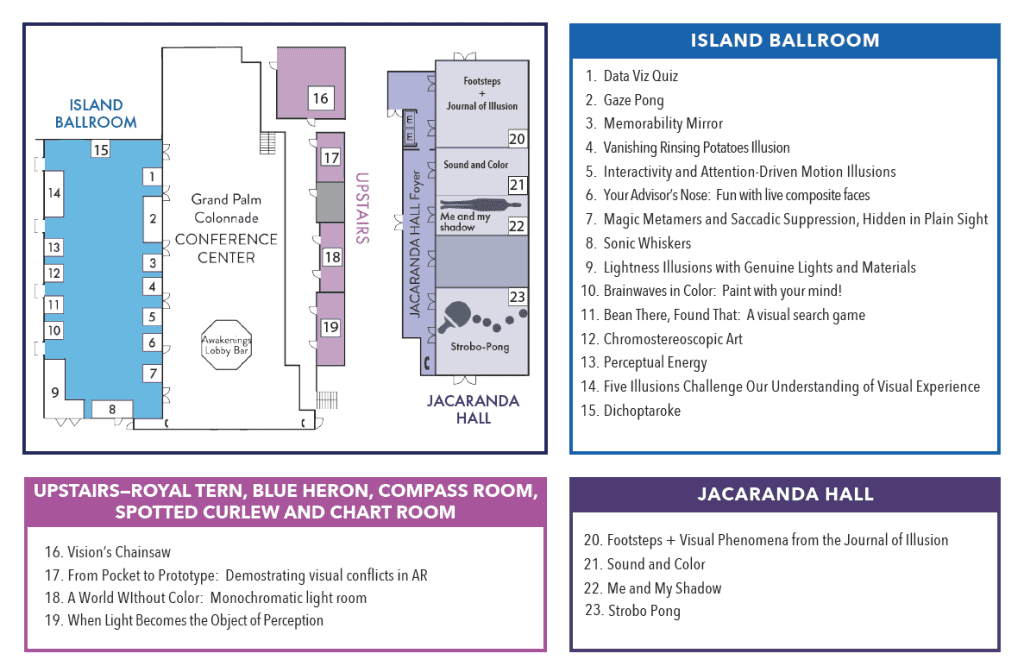

Monday, May 18, 2026, 7:00 – 10:00 pm, Talk Room 1-2 and various rooms

Please join us Monday evening for the 22nd VSS Demo Night, a spectacular night of imaginative demos solicited from VSS members. The demos highlight the important role of visual displays in vision research and education.

This year’s Demo Night will be organized and curated by Daw-An Wu, Caltech; Peter Kohler, York University; Anna Kosovicheva, University of Toronto Mississauga; and Gideon Caplovitz, University of Nevada, Reno.

Demos are free to view for all registered VSS attendees and their families and guests.

The following demos will be presented from 7:00 to 10:00 pm in Talk Rooms 1-2, Jacaranda Hall, Snowy Egret, and Blue Heron:

Memorability Mirror

Hidekazu Nagamura1, Nakwon Rim2, Wilma A. Bainbridge2, Shunichi Kasahara1

1Sony Computer Science Laboratories, Inc., 2University of Chicago

Ever wondered if your own face could be made more—or less—memorable? Our system offers a controllable interface that lets you augment or diminish your facial memorability at will. Whether you want to be an unforgettable icon or a face in the crowd, the power is now in your hands.

Your advisor’s nose: Fun with live composite faces

Anna Kosovicheva, Jiali Song, Silvia Guidi, Zainab Haseeb

University of Toronto

Have you ever wondered what your face looks like mixed with someone else’s? Create real-life composite faces with this classic museum exhibit! In this ultimate social “mixer”, two people sit across from each other, facing a set of mirrors, creating a composite face. Who nose what the result will be!

Me and my shadow

Sae Kaneko, Stuart Anstis, UC San Diego

At sunset, your shadow can be more than 10 times longer than your own height. This long shadow may appear to have a disproportionally small head and long legs, but the disproportion is not in the physical shape of the shadow. Experience this curious phenomenon with our 30 ft “shadows” on the floor and explore it from all angles.

Sonic Whiskers

Cesare Parise, University of Liverpool

Sonic Whiskers is an interactive sensory-substitution demo inspired by active whisking. Visitors will experience how sparse audiotactile feedback can support awareness of nearby objects and space, enabling object detection, reaching, and navigation with a proof-of-concept system.

Interactivity Illusions and Attention-Driven Motion Illusions

Shengjie Zheng, Shinsuke Shimojo, Björn Keyser, Caltech

These motion illusions reveal how action and attention shape perception. In dynamic noise, moving your hand creates an apparent magnetic attraction or repulsion, and moving the screen makes the noise appear stable behind it. In the Stepping Feet illusion, attending to the target makes its motion appear smoother and more continuous.

Gaze Pong

Kurt Debono, Marcus Johnson, SR-Research Ltd.

Experience a gaze-contingent version of the classic Pong duel. Challenge a colleague using gaze-controlled paddles in this fast-paced match, powered by high-speed synchronisation between two eye trackers. Go head-to-head in an oculomotor showdown where your eyes are the controller.

Strobo Pong

VSS Staff

Experience the chaos of table tennis under conditions of motion perception breakdown. Recreate a live demo of the original flash-lag illusion (but please, no smoking). Note for the photosensitive: The room will be illuminated solely by a flashing strobe light.

Dichoptaroke

Brooke Lim, McGill University

This interactive karaoke demo displays the lyrics of vision-science–themed parody songs in red, cyan, and black text. Viewed through anaglyph 3D glasses, lyrics are split between the eyes to promote binocular integration, showcasing a playful, social approach to binocular-based amblyopia rehabilitation.

Brainwaves in color: Paint with your mind!

Santoshi Ramachandran, Brett Bays, Brain Vision LLC

In this interactive neuroscience demo, one participant wears a VR headset and paints in a 3D virtual space. Simultaneously, a second participant wears a 7-channel EEG cap and uses their brainwaves and subtle facial movements (blinks, jaw clenches, etc.) to control the colors, intensity, and effects of the painting. The result is a living, interactive canvas that responds directly to brain activity. Participants receive immediate feedback on how their mental state influences the art, making complex neuroscience concepts accessible, fun, and visually striking.

Bean There, Found That: A Visual Search Game

Santoshi Ramachandran, Brett Bays, Brain Vision LLC

In this interactive neuroscience challenge, participants search for hidden lips within images of coffee beans. Each participant has 30 seconds to find as many lips as possible, competing to be the best. EEG sensors can simultaneously record brain activity during the search. This demonstration provides a hands-on look at how scientists measure attention and object recognition in real time, revealing how the visual system and brain work together to identify targets in your environment.

Vision’s Chainsaw

Patrick Cavanagh, Glendon College / Dartmouth College; Stuart Anstis, UCSD

Moving frames can displace the apparent location of brief flashes presented at the moment the frame changes direction. We use this here to attempt a live dismemberment of the human body. We invite observers to step up and be severed.

Vanishing Rinsing Potatoes Effect

Christopher Tyler, SKERI

When swirling new potatoes (or other spherical objects) in a colander pan to rinse them, one or two seem to be missing, miraculously reappearing as they come to a halt. It is not simply motion blur, because several are seen discretely while in motion, while others seem to disappear.

The Footsteps Illusion

Akiyoshi Kitaoka, Ritsumeikan University; Stuart Anstis, UC San Diego; Yuki Kobayashi, CiNet

We demonstrate variants of the footsteps illusion (Anstis 2001). Explanations include:

1. A difference in perceived speed depending on edge contrast (Thompson 1982).

2. An extinction effect (Wade 1990), leading to position or motion captures.

Related illusions include the kick-forward and kick-back illusions and the driving-on-a-bumpy-road illusion.

Perceptual Energy

Mykola Haleta; Gideon Caplovitz, University of Nevada, Reno

Certain visual images and videos seem to challenge the visual system’s ability to stably represent what is being looked at and, in doing so, appear to cause multiple perceptual processes to become highly energetic. Come see artworks created by graphic designer Mykola Haleta and experience perceptually energetic images for yourself!

Magic Metamers and Saccadic Suppression, Hidden in Plain Sight

Peter April, Jean-Francois Hamelin, Dr. Lindsey Fraser

VPixx Technologies

Can visual information be hidden in plain sight? We use our 6-primary projector to display a secret message concealed using chromatic metamers. Look through a filter to reveal the hidden message! Back by popular demand, the PROPixx 1440 Hz projector displays text that is visible only during your saccades. The player with the fastest word sighting wins a drink ticket!

From Pocket to Prototype: Demonstrating Visual Conflicts in AR

Daniel Spiegel, Meta Reality Labs

Binocular augmented reality introduces perceptual challenges—such as the vergence-accommodation conflict (VAC) and the less-explored stereopsis-occlusion conflict (STOC)—that impact visual performance and comfort. This demo showcases VAC and STOC through a spectrum of tools, from $15 smartphone simulators to actual headsets, offering intuitive, hands-on experiences for any audience.

Chromostereoscopic Art

Bernardo MXKT; Gideon Caplovitz, University of Nevada, Reno

Come experience stereoscopic vision as never before through the art of Bernardo MXKT. Discuss the principles of chromostereopsis, the factors that maximize the quality of the experience, and applications to the study of depth perception.

Five Illusions Challenge Our Understanding of Visual Experience

Paul Linton, Columbia University

Five illusions suggest our visual experience is much simpler than we previously thought. We show illusions demonstrating that (1) color constancy, (2) size constancy, and (3) depth constancy can operate without fundamentally affecting our visual experience. We also show that (4) the hollow-face illusion does not appear to affect stereo vision. Finally, we show that (5) visual scale (apparent size) relies on distortions of 3D shape rather than on distance perception. For explanations of all of these illusions, please see https://FiveIllusions.Github.io

Data Viz Quiz

Jeremy Wilmer, Wellesley College

Match your wits against some surprisingly tricky graph-interpretation phenomena and get immediate feedback pointing the way to best practices in graph design.

A delightful gallimaufry! Astonishing illusions and strange phenomena, demonstrated with genuine lights and materials according to the latest scientific principles

Richard Murray, Anne Peiris, York University; Emma Neto, Farhan Abdul Vaheed, Jamie G. E. Cochrane, Pranjal Patel, McMaster University

Lightness illusions are most striking when created with real lights and surfaces. Here we show an array of lightness phenomena, constructed using a range of materials, from LEGO and painted blocks to computer-controlled lab apparatus. We introduce a new illusion, the ‘fishbone’, that evokes strong individual differences in lightness perception.

When Light Becomes the Object of Perception

Seth Riskin, M.I.T.

The demo links (1) projective geometry—privileged viewpoint/center of projection and collapse of structure; (2) ecological optics/light fields—systematic view dependence of the optic array; and (3) perceptual inference—how the visual system assigns structure to surfaces versus illumination, and what occurs when priors about perspective and lighting are violated.

A world without color: Monochromatic light room

Rosa Lafer-Sousa1, Helen E. Feibes2, Spencer R. Loggia2,3

1UW-Madison, 2National Eye Institute, National Institutes of Health; 3Department of Neuroscience, Brown University

We provide an immersive experience of the world without color using monochromatic low-pressure sodium light (589 nm). The demo highlights the myriad benefits color provides in natural vision. It also showcases a surprising finding: faces, and only faces, provoke a paradoxical memory color, appearing greenish (Hasantash et al., 2019).

Sound & Color

Sound & Color, a 15-minute documentary, follows synesthesia artist Sarah Kraning as she collaborates with our film team to animate her visual experience of sound.

Visual Phenomena from the Journal of Illusion

Alex Gokan, American University; Yuki Kobayashi, CiNet Osaka; Akiyoshi Kitaoka, Ritsumeikan University; Arthur Shapiro, American University; Stuart Anstis, UCSD

The Journal of Illusion has been in operation since 2019 with Akiyoshi Kitaoka as founder and editor. Here we will present some phenomena that have been published in JoI since that time, including some illusions from the authors of this demo.